Our team looks after the cross-government publishing API application and we were recently tasked with improving how content dependencies are managed.

On the GOV.UK website, any piece of content may hold links to related articles or translations. In each case there’s a dependency between the content linking out and the linked content.

The old way of managing dependencies

The new publishing API is being developed as a single method of publishing for all of our applications including Whitehall, Specialist Publisher and Travel Advice Publisher.

The first part of the platform to be built was the content-store, which serves as a central repository for all published content. Items in the content-store database used to be stored with full details of their own content, and links to other items were represented as IDs (the links weren’t expanded and stored inside the content).

The content-store expanded these links at run time, converting the IDs into text and URLs, but this lead to long request times and scaling problems. Because the content-store had a lot of complexity, we wanted to turn it into a simple caching layer with no responsibility for determining links or storing content dynamic information. The new publishing API was to be the canonical source of content and we just needed some way of updating content whenever links changed (as this would no longer be determined by the content-store at run time).

Our new solution

We now update linked content automatically within the publishing API through the use of LinkSets. The LinkSets ensure that when you make a query, the publishing API can immediately find the parent content and its dependencies. The LinkSet identifies all the relations between articles, like vertices in a graph, and is saved within PostgreSQL (the publishing API is backed by a PostgreSQL database). We create a LinkSet for each article before it’s published.

The content store is to be a simple cache, storing no details of content of link IDs.

An example of how this works in practice

Say an ‘income tax’ article has a parent article called ‘money and tax’, and a child called ‘tax codes’. Meanwhile, the ‘tax codes’ content has a child article of ‘tax numbers’. Furthermore, the ‘income tax’ content links to an ‘emergency tax’ article this in turn links back to ‘income tax’.

If we updated the ‘income tax’ content to be called ‘contributions’ instead, we’d want to notify ‘tax codes’ and ‘emergency tax’ of this change. We’d also have to update the ‘tax numbers’ and ‘money and tax’ articles since the breadcrumb will have changed to:

`Money and tax > Contribution > Tax Codes > Tax Numbers`

There are two sides to the query, one for following dependencies down the graph, and one for following it up. In the following query example, I’ll just be showing how we followed dependencies down the graph (since following dependencies upwards is a very similar process).

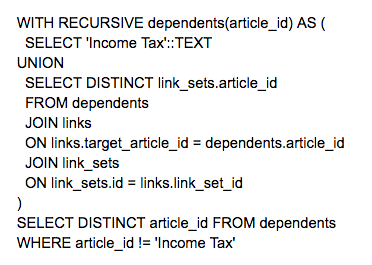

In PostgreSQL the query you’d make to find all the (downwards) related content (even if not directly related) for the ‘income tax’ content would look like this:

The system’s response to the query would be to first create a temporary table called dependents that just includes the ‘income tax’ article:

article_id

Income tax

The second step would find all the links that point to the ‘income tax’ article (in this case the ‘tax codes’ and ‘emergency tax’ content), and save them as a temporary table, eg as:

article_id

Income tax

Tax codes

Emergency tax

Then the system would look for all the links that point to the table’s contained articles (in this case the ‘tax numbers’ content). This then gives a temporary table of:

article_id

Income tax

Tax codes

Emergency tax

Tax numbers

As the final step, all values not including the ‘income tax’ article would be in the table, as:

article_id

Tax codes

Emergency tax

Tax numbers

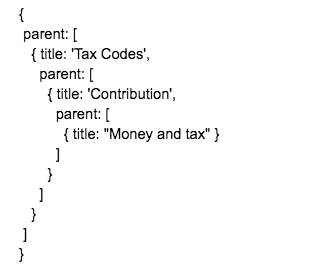

After all the dependencies are found, they are queued into a background job in the content-store application and all the relevant links are updated. The above example would be represented to the content-store as this blog of code:

More information on the specifics and examples of the actual code we use to find dependencies and update their representation can be found here:

Our GitHub writeup on dependency resolution

Code to update links in the content store

The PostgreSQL query example (used above)

You can follow Douglas on Twitter, sign up now for email updates from this blog or subscribe to the feed.

If this sounds like a good place to work, take a look at Working for GDS - we're usually in search of talented people to come and join the team.

1 comment

Comment by Theo Moorfield posted on

Very interesting, as a freelance web design at http://5fifty.co.uk/ this is definitely going to come in handy for a client I have on the go at the moment.

Thanks! I will be following this blog closely.