Last summer, the Government Digital Service (GDS) moved across London from Aviation House in Holborn to The White Chapel Building in Aldgate. There were many challenges with moving over 600 people, and the design and build of our internal IT network was one of the top priorities.

The GDS network team handled the building of the new network internally. This is a small team of 6 skilled people who design, build and operate the internal infrastructure. They make sure the network is resilient, efficient, secure and has minimal downtime.

Our network team carried out the architectural design, product selection, planning, build, test and operation, which helped us to keep costs down. We wanted the transition to be as smooth as possible, so that staff could keep delivering public services with no interruption to their work.

We had to configure and set up the internal IT infrastructure, which included:

- the internet line

- networking - Access Points (APs), switches and firewalls

- server and storage

- printing

Preparing to install our new network

GDS staff hot desk and are heavy consumers of cloud services. More than 1,000 unique wireless devices connect daily, transferring an average of 17 terabytes of data every month so far this year. So a robust and reliable internet connection, coupled with a fast wireless network, is critical to the everyday work of GDS. We had to make sure the new infrastructure met our needs and could scale as we grew.
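To put those figures in context, here is a rough back-of-envelope calculation. It is only a sketch: it assumes decimal terabytes, a 30-day month and exactly 1,000 devices (the post says "more than 1,000", so the per-device figure is an upper estimate). None of these assumptions come from the network team.

```python
# Back-of-envelope averages from the figures quoted in the post.
MONTHLY_BYTES = 17 * 10**12  # 17 terabytes, assuming decimal TB (assumption)
DAILY_DEVICES = 1_000        # "more than 1,000", treated as a lower bound
DAYS_PER_MONTH = 30          # assumption


def average_mbit_per_second(monthly_bytes: int, days: int) -> float:
    """Mean throughput if traffic were spread evenly over the whole month."""
    seconds = days * 24 * 60 * 60
    return monthly_bytes * 8 / seconds / 1e6


def daily_megabytes_per_device(monthly_bytes: int, days: int, devices: int) -> float:
    """Mean data moved per device per day, in decimal megabytes."""
    return monthly_bytes / days / devices / 1e6


print(f"{average_mbit_per_second(MONTHLY_BYTES, DAYS_PER_MONTH):.1f} Mbit/s average")
print(f"{daily_megabytes_per_device(MONTHLY_BYTES, DAYS_PER_MONTH, DAILY_DEVICES):.0f} MB per device per day")
```

Averages like these understate peak demand, because office traffic is concentrated in working hours rather than spread evenly across the month.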

We started planning in August 2016 and visited the new building almost a year before moving in. The goal was to:

- identify the office size

- plan where the internet line would come into the building

- see where to place the APs for the wireless network

Choosing our vendors for IT infrastructure

To spend our budget efficiently and get the best value for public money, we had a thorough evaluation process for vendors. We drafted requirements for each IT category and went out to market to find vendors. We used the Digital Marketplace and referred to Gartner's Magic Quadrant to find the best suppliers for our needs.

Vendors were invited to show us their products and we evaluated each one based on various factors including functionality and price. The process to find vendors took around 2 months. Once the evaluation process was complete, we started buying the hardware.

At this point we were under pressure to build the network quickly so GDS staff could start moving to the new building as early as possible. Our two-person team built and tested the network in one month, but ideally, we would have set aside 3 months for the building and testing phase.

The shorter window increased the risk of overrunning. Being able to configure some of the kit at the old building, before we had access to the new site, helped us compress the build and test timeline.

We had the following equipment delivered to our old office:

- 2 wireless LAN controllers (WLCs)

- 1 firewall

- 1 server

We took delivery of 2 WLCs because it was the first time we were setting up physical WLCs and we wanted to avoid any replication issues when setting up on site.

The firewall provides the core network services such as DHCP, DNS, VLAN segmentation and UTM security features. Getting it set up early also gave us a head start when we arrived at the new office and reduced the risk of the project overrunning. The rest of the equipment was held at a warehouse until the new office was ready.
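As an illustration of what VLAN segmentation with a DHCP pool per segment can look like, here is a minimal planning sketch using Python's standard `ipaddress` module. The post does not describe the real address plan, so the site block, VLAN IDs, segment names and the size of the reserved static range are all hypothetical. The real configuration lives on the firewall, not in a script like this.

```python
import ipaddress

# Hypothetical values: the post does not give the real address plan.
SITE_BLOCK = ipaddress.ip_network("10.20.0.0/16")
VLANS = {10: "staff-wifi", 20: "guest-wifi", 30: "printers", 40: "servers"}
STATIC_RESERVED = 10  # addresses kept out of DHCP in each subnet (assumption)


def plan_vlans(site_block, vlans, prefixlen=24):
    """Give each VLAN its own subnet, gateway and DHCP pool."""
    subnets = site_block.subnets(new_prefix=prefixlen)
    plan = {}
    for (vlan_id, name), subnet in zip(sorted(vlans.items()), subnets):
        hosts = list(subnet.hosts())
        plan[vlan_id] = {
            "name": name,
            "subnet": subnet,
            "gateway": hosts[0],  # first usable address
            # DHCP hands out everything after the reserved static range.
            "dhcp_pool": (hosts[STATIC_RESERVED], hosts[-1]),
        }
    return plan


plan = plan_vlans(SITE_BLOCK, VLANS)
for vlan_id, entry in plan.items():
    start, end = entry["dhcp_pool"]
    print(f"VLAN {vlan_id} {entry['name']}: {entry['subnet']} "
          f"gw {entry['gateway']} dhcp {start}-{end}")
```

Keeping each segment in its own subnet is what lets a firewall apply different rules to, for example, guest devices and printers.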

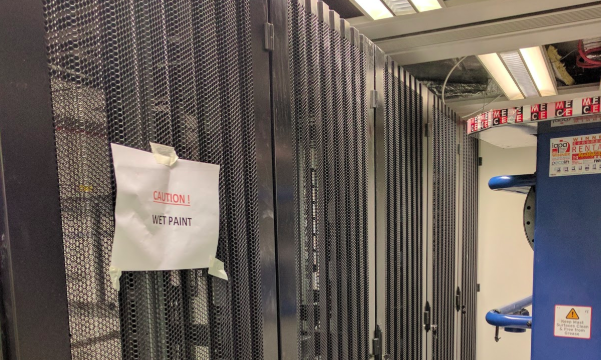

Setting up IT infrastructure at The White Chapel Building

We worked with engineers to help install the internet line. This was followed by the uninterruptible power supply (UPS) devices and then the network and server kit. This took 2 weeks.

As the IT network side of the office move was handled by 2 of our team, we had to hire a contractor to cover business-as-usual activity at the old office while we set up the network at the new office.

At GDS we operate a wireless-first policy - as opposed to using hardwired connections - to promote flexible working. Because of this, we planned for just one network port per bank of desks as a minimum. We expected these ports to be used only when the wireless connection could not provide sufficient bandwidth.

We carried out a wireless survey to help choose where best to place our 38 APs. We ran network cables from the server rooms across the office floor and ceiling space and then installed the APs.
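For a rough sense of load, the device and AP counts quoted in this post can be combined into an average number of devices per AP. This is only a sketch: it assumes devices are spread evenly across the floor, which a real wireless survey would not assume, and the 60% concurrency figure is a hypothetical value for illustration.

```python
DAILY_DEVICES = 1_000  # from the post: "more than 1,000" devices a day
ACCESS_POINTS = 38     # from the post


def devices_per_ap(devices: int, access_points: int, concurrency: float = 1.0) -> float:
    """Average devices per AP, assuming an even spread across all APs."""
    if access_points <= 0:
        raise ValueError("access_points must be positive")
    return devices * concurrency / access_points


# Every device connected at once, evenly spread.
worst_case = devices_per_ap(DAILY_DEVICES, ACCESS_POINTS)
# Hypothetical: only 60% of the day's devices connected at the same time.
typical = devices_per_ap(DAILY_DEVICES, ACCESS_POINTS, concurrency=0.6)

print(f"all devices at once: {worst_case:.1f} per AP")
print(f"60% concurrent (assumed): {typical:.1f} per AP")
```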

At this point we had the hardware in place and powered on. But we still needed to configure it, and with only weeks to go it was a race against time to finish before GDS staff arrived. We faced a lot of challenges, from trivial matters such as empty cardboard boxes obstructing construction workers, to more technical blockers.

A big technical challenge was the network switches. We wanted to manage the individual switches as a group through one network interface, but we initially had issues grouping the hardware together. We found the problem was caused by a newer version of the vendor's operating system, which required a different method to set up the grouping of switches. This issue took us a few days to resolve.

Doing everything in-house meant the team that operates the service is the same team that actually built it. This means we can carry out network changes or respond to incidents much more efficiently.

Achieving our goal and lessons learnt

Our ultimate goal was to configure the network so when GDS staff walked into the new building, they could continue their work without interruption. Despite the technical challenges and time pressures, this is something that we achieved on schedule.

Staff arrived at the new office in July 2017, greeted by the unique open space and the IT infrastructure already in place. A number of GDS staff provided positive feedback about their IT experience.

As with any project, there are always lessons our team can take away. With more time, we could have added the new kit to the monitoring and logging servers before staff arrived at the building. But once staff arrived, we were focused on everyday tasks, so it took a little longer to add all the new kit to the servers.

Regardless of this, for the team, the biggest satisfaction after all our hard work behind the scenes was seeing staff arrive, connect and continue to work seamlessly.

To make sure you stay up to date with all the latest developments, you can sign up to alerts from the Technology in government blog.

3 comments

Comment by Malcolm Doody posted on

Great article, though seems a lot of rack space for one server, one firewall and a couple of WLC's ...

BTW, the gremlins have crept in: Universal Power Supply --> Uninterruptible Power Supply ??

Comment by Mohamed Hamid posted on

Hi Malcolm

Thanks for the comment, the kit you mention was the minimum required to allow us to make a start on the build before getting site access.

The network is dual-stacked including a test environment with two of the racks used by other teams. One of the racks is used for patch management and a portion of our network at the old office was migrated later on.

Comment by Egle posted on

Sometimes you don't realise the hard work going on behind the scenes. So thank you!